Top 6 No-Code Cloud Platforms for Web Scraping in 2026: Comprehensive Review and Comparison

Table of contents

- Introduction

- Methodology of the ranking

- Criteria for selection and comparison

- #1. Apify: best balance of power, anti-bot resistance, and scalability

- #2. Octoparse: easiest visual builder for beginners

- #3. Browse AI: fastest start and convenient integrations with Sheets

- #4. PhantomBuster: best for social media and lead generation

- #5. Diffbot: best for structured entities and semantic search

- #6. ParseHub: reliable visual parser for basic and intermediate tasks

- Comparison table

- Alternatives not in the top

- Recommendations for selection

- FAQ

- Conclusion

Introduction

No-code web scraping is experiencing a new surge: marketers, analysts, and entrepreneurs need quick, reliable, and compliant ways to gather data without relying on a development team. Between 2024 and 2026, no-code tools matured to the point where complex collection routines for marketplaces, SERPs, and social media can be launched in a few clicks, with results sent straight to Google Sheets, a CRM, or a BI tool.

Who benefits most from this? Those who regularly monitor prices and availability on marketplaces (Wildberries, Ozon, Amazon), analyze search results and competitors, aggregate data from social media for lead generation and demand analysis, and update catalogs and product listings. No-code services enable fast validation of hypotheses without distracting development from core tasks.

In this ranking, we've compared the top six no-code cloud platforms for web scraping: Apify, Octoparse, ParseHub, PhantomBuster, Diffbot, and Browse AI. We've evaluated them based on ease of use, proxy support, anti-bot defenses, data types extracted, export capabilities, pricing, limits, speed, and scheduling. According to our criteria, the frontrunners are: Apify for scalability and mature infrastructure, Octoparse for its intuitive visual builder, Browse AI for rapid setup and integrations, PhantomBuster for social media handling, Diffbot for ready-made structured entities and semantic search, and ParseHub as a solid basic tool with a long history.

Prices and specifications were verified as of January 2026; some plans may have changed since, so check current terms in your account. We focused on objectivity and utility for decision-making; each service has its strengths and limitations, and we’ll show which suits whom best.

Methodology of the Ranking

To make the ranking transparent and reproducible, we used a mixed approach: functional testing of typical tasks, studying documentation and public pricing pages, analyzing user reviews and case studies, and comparing integration capabilities and scalability.

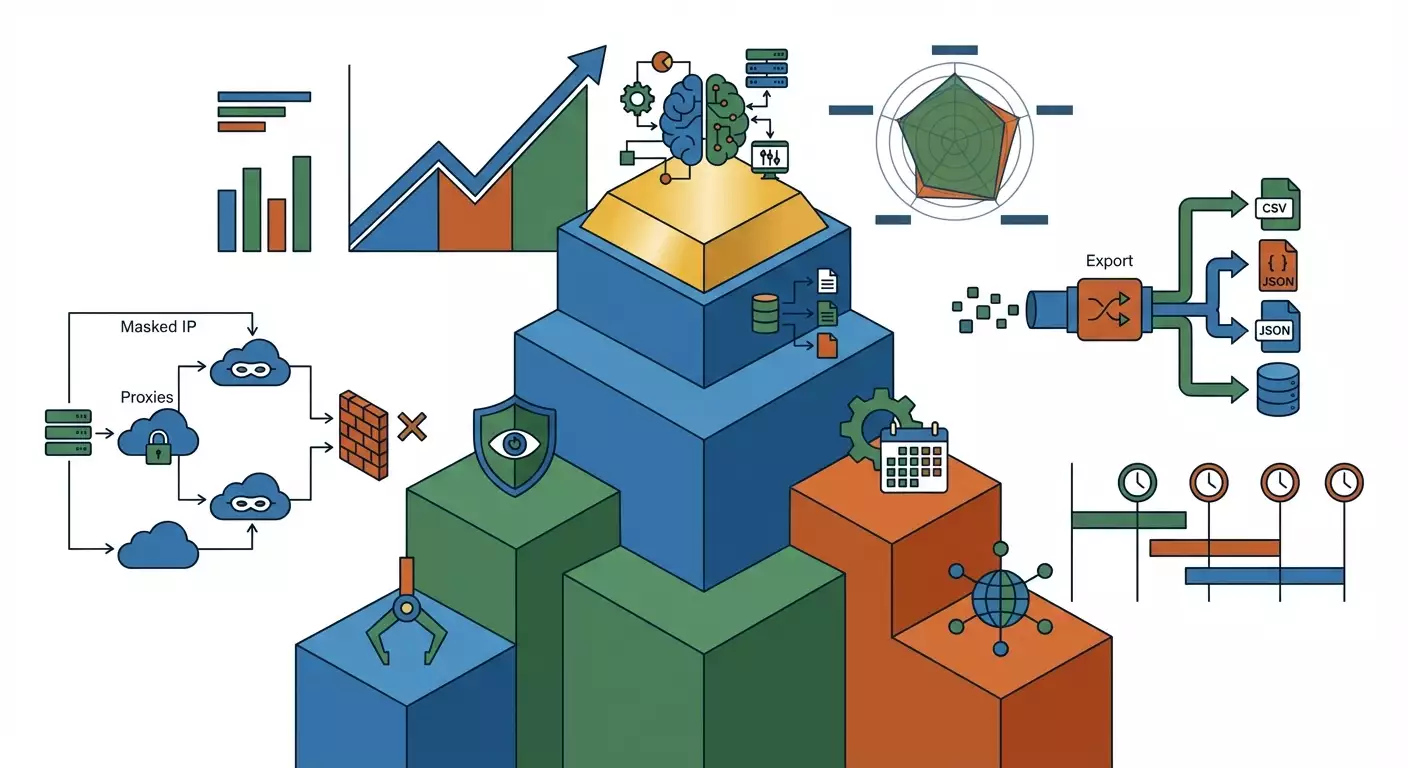

Criteria and weights:

- Functionality and resistance to anti-bot measures, 30%: availability of a visual builder, support for JS rendering, handling of pagination and dynamic pages, captcha bypass through integrations, stability against Cloudflare and other defenses.

- Proxy and networking capabilities, 15%: built-in rotation, residential/mobile proxies, BYO proxies.

- Ease of use and UX, 15%: entry threshold, educational materials, templates, preconfigured bots.

- Pricing and limits, 15%: cost of plans and transparency of limits per requests/minutes/credits.

- Speed and scheduling, 10%: parallelism, scheduling, queues.

- Export and integrations, 10%: CSV, JSON, Google Sheets, API, webhooks, Zapier/Make.

- Support and feedback, 5%: support channels, knowledge base, review sentiment.

The final score is a weighted average across all criteria.
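The weighted average described in the methodology can be sketched in a few lines of Python. The weights are the ones listed in the criteria; the per-criterion scores in the example are hypothetical placeholders and not the scores assigned in this ranking.

```python
# Weights from the methodology, in percent (they sum to 100).
WEIGHTS = {
    "functionality_antibot": 30,
    "proxies": 15,
    "ease_of_use": 15,
    "pricing_limits": 15,
    "speed_scheduling": 10,
    "export_integrations": 10,
    "support_feedback": 5,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Return the weighted average of 0-10 scores using WEIGHTS."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return round(total / sum(WEIGHTS.values()), 2)


# Hypothetical scores for an imaginary platform (illustration only).
example_scores = {
    "functionality_antibot": 9.0,
    "proxies": 8.0,
    "ease_of_use": 7.0,
    "pricing_limits": 8.0,
    "speed_scheduling": 9.0,
    "export_integrations": 9.0,
    "support_feedback": 8.0,
}
example_result = weighted_score(example_scores)  # 835 / 100 = 8.35
```

Because functionality carries twice the weight of any other criterion, a one-point change there moves the total by 0.3, while the same change in support moves it by only 0.05.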

How information was gathered: we completed onboarding, built typical scenarios (scraping product cards and prices, monitoring SERPs, collecting public profiles and posts), tested exports to CSV/JSON and Google Sheets, ran basic anti-bot scenarios, and reviewed official documentation, public FAQs, typical limits, and terms of use. What was not included: closed betas and features unavailable in standard plans; individual discounts; non-public partnership conditions; and scenarios that go beyond ethical and legal data use. Important: adhere to the terms of use of source sites, robots.txt, and local laws.
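The robots.txt requirement can be checked programmatically before a scenario is scheduled. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content, the bot name, and the URLs are hypothetical, and in practice the file would be fetched from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one is served at /robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /account/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

A check like this covers only robots.txt; the site's terms of use and local law still have to be reviewed separately.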

Criteria for Selection and Comparison

We broke down the selection based on key parameters that any practitioner encounters.

Ease of use: availability of a visual builder, templates for popular sites, onboarding tours, reference guides, and video tutorials. We measured time to first result and number of steps, as well as the availability of typical templates (Amazon, Google SERP, LinkedIn).

Proxies: built-in rotation, availability of data center and residential IPs, ability to connect mobile proxies, support for external providers. We measured by the availability of options and flexibility in configuration.
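To make "rotation" concrete, here is a minimal round-robin sketch in Python. The proxy addresses are hypothetical placeholders, and the platforms in this review may well use more elaborate strategies (sticky sessions, health checks, geo pools) than this simple cycle.

```python
from itertools import cycle

# Hypothetical proxy endpoints; real ones come from your provider.
PROXIES = [
    "http://proxy-1.example.net:8000",
    "http://proxy-2.example.net:8000",
    "http://proxy-3.example.net:8000",
]


def assign_proxies(urls: list[str], proxies: list[str]) -> list[tuple[str, str]]:
    """Pair each URL with the next proxy in round-robin order."""
    if not proxies:
        raise ValueError("at least one proxy is required")
    pool = cycle(proxies)
    return [(url, next(pool)) for url in urls]
```

With three proxies and five URLs, the fourth URL reuses the first proxy, so the load is spread evenly rather than randomly.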

Bypassing anti-bot defenses: functioning through real browsers with JS rendering, behavioral delays, emulation, support for captcha solvers, and stability against Cloudflare and similar barriers. We measured by stability in executing tasks on protected sites and the number of retries.
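The "delays" and "number of retries" mentioned here usually follow a pattern like exponential backoff with jitter. The sketch below is a generic illustration in Python, not the retry logic of any specific platform; the function name and default values are assumptions.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    task: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying on any exception with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the last error.
            # Delay doubles each attempt: base, 2*base, 4*base, ...
            delay = base_delay * 2 ** (attempt - 1)
            # Random jitter avoids a perfectly regular request rhythm.
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the behavior can be tested without actually waiting; in production the default `time.sleep` is used.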

Types of data: HTML, dynamic content, APIs and endpoints, extraction of structured entities (product, news, company), images. Important for versatility and quality.

Export: support for CSV/JSON, tables (Google Sheets), webhooks, REST API, Zapier/Make; we measured the number of available methods and ease of setup.
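When a platform delivers JSON (for example through an API or webhook) but the destination expects CSV, the conversion is short. The following Python sketch assumes the export is a flat JSON array of objects; the field names in the usage note are hypothetical.

```python
import csv
import io
import json


def json_records_to_csv(json_text: str) -> str:
    """Convert a JSON array of flat objects into CSV text with a header row."""
    records = json.loads(json_text)
    # Union of all keys, preserving the order in which they first appear.
    fieldnames = list(dict.fromkeys(key for record in records for key in record))
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)  # Missing keys are written as empty cells.
    return buffer.getvalue()
```

For instance, two records where only the second has an `in_stock` field produce a three-column CSV with an empty cell in the first data row.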

Pricing and limits: cost of basic and advanced plans, clarity of the model (credits, minutes, tasks), limits on parallel tasks, and availability of a free plan/trial.

Speed and scheduling: parallelism, queues, scheduling with flexible rules (daily, cron, every X minutes), ability to restart on failures.
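One practical use of flexible scheduling is staggering tasks so they do not all start at once and exhaust parallelism limits. The Python sketch below computes evenly spaced start times inside a window; the function name and the example window are assumptions, and the resulting times would still have to be entered into each platform's own scheduler.

```python
from datetime import datetime, timedelta


def staggered_starts(
    window_start: datetime, window_minutes: int, task_count: int
) -> list[datetime]:
    """Spread task_count start times evenly across the window."""
    if task_count < 1:
        raise ValueError("task_count must be at least 1")
    step = timedelta(minutes=window_minutes) / task_count
    return [window_start + i * step for i in range(task_count)]
```

Four tasks in a 60-minute window starting at 09:00 would begin at 09:00, 09:15, 09:30, and 09:45.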

Thresholds for inclusion in the TOP: cloud operation, availability of no-code scenarios or templates, export to CSV/JSON and Google Sheets or via API, scheduling. All six selected platforms meet these requirements.

#1. Apify — Best Balance of Power, Anti-Bot Resistance, and Scalability

Overview

Apify is a cloud automation and web scraping platform with a vast marketplace of ready-made scrapers (Actors) and infrastructure: queues, storage, proxies, scheduler, webhooks. Founded in 2015, based in the EU. Specialization: large-scale scraping projects, intent-oriented templates (Amazon, SERP, stores, social media), and an ecosystem for teams and BI integration. Target audience: from marketers and analysts who need ready presets and fast starts to advanced users and data departments needing flexibility and reliable proxies.

Key Features

- A large marketplace of ready-made “Actors” for popular sources (Amazon, Google, LinkedIn profiles, company directories, reviews, news).

- Apify's built-in proxies (data center, residential, SERP), flexible rotation, support for external proxies.

- Execution in real browsers, JS rendering, session management, delays, retries; integration with captcha solvers via actors.

- Data and file storage, task queues, logging, monitoring, and alerts.

- Export to CSV/JSON, Google Sheets, API, webhooks; connectors to Zapier/Make.

- Scheduler with flexible scheduling and triggers.

Unique features: a powerful ecosystem of Actors, including commercial and free options; SERP and Amazon scrapers with support for rotation and geo-targeting; preview and trial runs; data residency in the EU; extensive documentation and community.

Technical specifications: high parallelism, customizable limits on memory/time per task, resilience due to retries and queues; support for enterprise features (SSO, audit log, role-based access model).

Integrations: API, webhooks, Google Sheets, Zapier/Make, BigQuery/Databricks through connectors/scripts (for no-code — webhooks or ready integrations suffice).
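For readers who want to see what the API route looks like, here is a minimal Python sketch that builds a download URL for a dataset and fetches it. It assumes the dataset items endpoint of the public Apify API v2 with `format` and `token` query parameters; verify the path and parameters against the current API reference before relying on it. The dataset ID and token are placeholders.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed base URL of the public Apify API v2.
API_BASE = "https://api.apify.com/v2"


def dataset_items_url(dataset_id: str, token: str, fmt: str = "json") -> str:
    """Build the URL for downloading dataset items in the given format."""
    query = urlencode({"format": fmt, "token": token})
    return f"{API_BASE}/datasets/{dataset_id}/items?{query}"


def download_dataset(dataset_id: str, token: str, fmt: str = "json") -> bytes:
    """Fetch the dataset over HTTPS and return the raw response body."""
    with urlopen(dataset_items_url(dataset_id, token, fmt), timeout=30) as response:
        return response.read()
```

Passing the token in the query string keeps the sketch short; an authorization header is generally the safer choice because URLs tend to end up in logs.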

Pricing and Plans

The model is based on platform credits and execution limits. As of January 2026: Free plan with small monthly credits for testing; Starter around $49/month, Team around $499/month, Business — upon request. Paid proxies (especially residential) are charged separately, depending on the type of IP and traffic volume. A free period and free actors are available to test hypotheses. The price/performance ratio is high due to scalability and available templates: you pay for real power and resistance to blocks.

Advantages

- The strongest ecosystem of ready-made scrapers saves weeks of development.

- Mature proxies and rotation, resistance to anti-bots, flexible configuration.

- A complete stack: queues, storage, scheduler, logging, alerts.

- Flexible export: API, webhooks, CSV/JSON, Google Sheets.

- Professional level for teams and enterprise: roles, SSO, audit log, SLA.

Disadvantages

- Some Actors are paid or have limits, meaning you'll have to optimize your budget.

- For very specific sites, complex parameter tuning may be required.

- Higher learning curve compared to the "easiest" builders.

Best For

Marketers and analysts needing reliable collection from marketplaces and SERPs with a low failure rate; growth teams and data departments running dozens of scheduled tasks; enterprises with high security and scalability requirements.

Assessment by Criteria

- Functionality: 9.5/10

- Price: 8/10

- Usability: 8.5/10

- Support: 8.5/10

- Reviews: 8.5/10

- Overall Rating: 9/10

✅ Best For: companies needing scalability, block resistance, and automation from collection to export.

Main Advantage: combination of powerful infrastructure and a rich marketplace of ready-made scrapers.

#2. Octoparse — Easiest Visual Builder for Beginners

Overview

Octoparse is a popular cloud and desktop platform for visual website scraping without code. The company originated in China and has been active in the global market since the mid-2010s. Specialization: clear interface, templates for common scenarios, easy action setup (clicks, pagination, scrolls, input). Target audience: marketers, content specialists, small and medium business owners who need a quick start and minimal learning curve.

Key Features

- Visual builder: point-and-click element selection, auto-generation of XPath/CSS selectors.

- Cloud Extraction: cloud agents perform tasks without the local machine.

- Built-in IP rotation, basic anti-bot settings, user simulation (delays, scroll).

- JS rendering through an embedded browser, working with dynamic pages.

- Export to CSV/JSON, Excel, Google Sheets; API; integrations via Zapier/Make.

- Scheduling: daily/hourly/interval-based.

Unique features: “Smart Mode” for quick auto-assembly of tables from simple pages; a library of templates for popular sites; rich guides and video tutorials.

Technical specifications: stable cloud runner, parallel tasks on paid plans, support for authentication and simple captcha scenarios (via hints and third-party solvers).

Integrations: Google Sheets, Excel, API, Zapier/Make, databases via exports/scripts.

Pricing and Plans

As of January 2026, the typical plan lineup is: Free with speed/volume limitations; Standard around $75/month; Professional around $209/month; Enterprise on request. Cloud minutes and parallelism increase with plans. Built-in proxies are included for cloud launches; for local tasks, you can connect external proxies. The price/performance ratio is excellent for beginners and teams without engineering support.

Advantages

- Best visual builder for a quick start.

- Templates and educational materials significantly reduce setup time.

- Cloud agents and built-in IP rotation simplify operation.

- Flexible export and automation via API and Zapier/Make.

- Good for bulk tasks on simple to moderately complex sites.

Disadvantages

- Complex anti-bot sites (rigorous Cloudflare, challenging captchas) may require workarounds and still fail.

- Deeply customized scenarios sometimes hit constraints of the visual flow.

- Optimizing a large flow of tasks requires careful adjustment of schedules and limits.

Best For

Beginners, SMBs, marketers with limited setup time; projects with regular, but not extreme data volumes; scraping catalogs, news, product cards on average sites where speed of implementation is the key.

Assessment by Criteria

- Functionality: 8.5/10

- Price: 8/10

- Usability: 9/10

- Support: 8/10

- Reviews: 8.3/10

- Overall Rating: 8.5/10

✅ Best For: beginners and teams without developers who need quick no-code results.

Main Advantage: intuitive visual builder and a library of templates.

#3. Browse AI — Fastest Start and Convenient Integrations with Sheets

Overview

Browse AI is a cloud tool where you “train” a robot by recording your actions and selecting data on a page, then run monitoring and export via integrations. The company focuses on simplicity, speed, and typical business cases: price monitoring, product card tracking, SERPs, lead tables. Target audience: marketers, product analysts, e-commerce owners, and agencies.

Key Features

- Training-by-recording: training the robot with a few clicks.

- Cloud browsers, basic IP rotation; robots can work on a schedule.

- Ready-made recipes for popular sites and tasks.

- Export to Google Sheets “out of the box,” CSV/JSON via API and webhooks.

- Notifications and alerts on data changes.
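Alerts on data changes boil down to comparing the latest extraction with the previous one. The Python sketch below illustrates the idea for price monitoring; it is not Browse AI's implementation, and the SKU identifiers in the usage note are hypothetical.

```python
def diff_snapshots(
    previous: dict[str, float], current: dict[str, float]
) -> dict[str, list[str]]:
    """Compare two {item_id: price} snapshots and report what differs."""
    return {
        "added": sorted(current.keys() - previous.keys()),
        "removed": sorted(previous.keys() - current.keys()),
        "changed": sorted(
            key
            for key in previous.keys() & current.keys()
            if previous[key] != current[key]
        ),
    }
```

If yesterday's snapshot holds `sku-1`, `sku-2`, `sku-3` and today's holds `sku-1` at a new price, an unchanged `sku-2`, and a new `sku-4`, the result lists `sku-4` as added, `sku-3` as removed, and `sku-1` as changed. An alert would fire whenever any of the three lists is non-empty.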

Unique features: maximum simplicity in launching tasks for a non-technical audience; very fast integration with Google Sheets; convenient management of robots and their statuses.

Technical specifications: cloud queuing and parallelism are limited by the plan; support for authorization, click flows, and click-through pagination; basic anti-bot guidance. For complex sites, fine-tuning of delays, templates, and alternative paths may be required.

Integrations: Google Sheets, Slack/Email notifications, API and webhooks, Zapier/Make for integration with CRM/BI.

Pricing and Plans

Typical model as of January 2026: Free with limitations (few robots, small limits); Starter around $48/month; Professional around $124/month; Team around $249/month; Enterprise — upon request. Consider the concept of “credits” or “robot launches” and monitoring limits; final cost depends on update frequency and number of pages. The price/performance ratio is very good for tasks with a frequency of 1-4 times a day and moderate volumes.
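The dependence of cost on update frequency and page count is simple arithmetic, sketched here in Python. The assumption of one credit per run and the plan allowances in the usage note are hypothetical; actual credit rules differ by plan and robot type.

```python
def monthly_runs(pages: int, checks_per_day: int, days: int = 30) -> int:
    """Number of robot runs per month for a given monitoring setup."""
    return pages * checks_per_day * days


def fits_plan(
    pages: int, checks_per_day: int, plan_credits: int, credits_per_run: int = 1
) -> bool:
    """Check whether the monthly credit need stays within the plan allowance."""
    return monthly_runs(pages, checks_per_day) * credits_per_run <= plan_credits
```

Monitoring 50 pages four times a day needs 50 × 4 × 30 = 6,000 runs per month, while once a day needs 1,500, which shows why check frequency is usually the first setting to tune when costs grow.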

Advantages

- Super fast start: training the robot takes minutes.

- Ideal integration with Google Sheets and notifications.

- Well suited to SMBs and marketing departments on paid plans.

- Simple recipes for typical tasks (SERP, product cards, price monitoring).

- Automatic retries and alerts on failures.

Disadvantages

- Limited resilience against complex anti-bot systems.

- Less flexible for very customized scenarios compared to Apify-level platforms.

- As monitoring frequency and the number of pages grow, the total cost can rise significantly.

Best For

Marketers, analysts, product managers, small and medium e-commerce owners; tasks where speed of launch, ease of maintenance, and clear export to Sheets/API are needed.

Assessment by Criteria

- Functionality: 8/10

- Price: 8.5/10

- Usability: 9.2/10

- Support: 7.8/10

- Reviews: 8.5/10

- Overall Rating: 8.4/10

✅ Best For: quickly launching page monitoring and SERPs with exports to Sheets.

Main Advantage: minimal entry barrier and convenient integrations.

#4. PhantomBuster — Best for Social Media and Lead Generation

Overview

PhantomBuster is a cloud automation and scraping platform with a focus on social media and sales funnels. It provides “phantoms” — ready-made bots for LinkedIn, X, Instagram, Facebook, Google, and more. Founded in the late 2010s, it is aimed at global markets. Target audience: SDR/BDR teams, performance marketers, agencies that need to collect and enrich leads from social media, launch outreach, and automate routine actions.

Key Features

- A large library of phantoms for social media and Google: scraping profiles, posts, commenters, followers; messaging and auto actions within permissible limits.

- Cloud browsers, basic IP rotation; support for connecting own proxies.

- Scheduling and action chains; saving sessions for accounts.

- Export to CSV/JSON, Google Sheets; API and webhooks.

- Notifications and anti-ban recommendations (throttling and platform limits).

Unique features: strong specialization in LinkedIn and other social media; combination of scraping and outreach automation; typical pipelines for lead generation.

Technical specifications: limitation on “slots” and execution minutes depending on the plan; support for behavioral emulation; resilience to bans depends on account hygiene and scenarios.

Integrations: Google Sheets, CRM via Zapier/Make and API; notifications.

Pricing and Plans

As of January 2026: Starter around $69/month, Pro around $139/month, Team around $399/month, Enterprise — upon request. The plan dictates the number of phantoms, daily execution limits, and parallelism. Built-in IP rotation exists, but for complex social media cases, BYO proxies and careful action frequency setup are recommended. For its niche — a good price/performance ratio.

Advantages

- Best specialization for social media and lead generation.

- Ready-made phantoms for key LinkedIn/X/Instagram tasks.

- Good integration with tables and CRM via Zapier/Make.

- Simple action chains and campaign planning.

- Support for external proxies for flexibility and resilience.

Disadvantages

- Narrow specialization: as a universal web scraper, it lags behind Apify and Octoparse.

- Risk of blocks on social media with aggressive scenarios.

- The limit structure (slots/minutes) takes some getting used to.

Best For

Sales teams, marketing agencies, outreach and SMM specialists needing streams of leads from social media and automation of actions within platform rules.

Assessment by Criteria

- Functionality: 8/10

- Price: 7.5/10

- Usability: 8.7/10

- Support: 8/10

- Reviews: 8.2/10

- Overall Rating: 8.1/10

✅ Best For: lead generation from social media and outreach.

Main Advantage: maximum readiness for social media and typical sales processes.

#5. Diffbot — Best for Structured Entities and Semantic Search

Overview

Diffbot is an AI extraction platform with ready models for entities (Article, Product, Organization, etc.) and its own Knowledge Graph. Launched in the early 2010s in the USA. Specialization: automatic extraction of structured data from web pages without selector configuration, full-text and semantic search on the knowledge graph. Target audience: market researchers, corporate analysts, fintech, and large companies where the accuracy of entities and access to the graph are crucial.

Key Features

- Model-based extraction: ready models for news, products, companies, etc.

- Knowledge Graph: access to a vast web data graph via API.

- Crawlbot and automatic extraction, compliant with robots.txt by default.

- JSON responses with entity structure; integration via API.

- Custom per-domain rules for targeted adjustments.
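The API-first workflow in the list above can be sketched as follows. This Python example assumes the v3 extraction endpoints of the form `https://api.diffbot.com/v3/<api>` with `token` and `url` query parameters and a response containing an `objects` array; check both against the current Diffbot documentation. The token, page URL, and sample response are hypothetical.

```python
import json
from urllib.parse import urlencode

# Assumed base URL of the Diffbot v3 extraction APIs.
DIFFBOT_BASE = "https://api.diffbot.com/v3"


def extract_url(api: str, token: str, page_url: str) -> str:
    """Build a request URL for an extraction API such as 'article' or 'product'."""
    query = urlencode({"token": token, "url": page_url})
    return f"{DIFFBOT_BASE}/{api}?{query}"


def first_object(response_text: str) -> dict:
    """Return the first extracted entity, or an empty dict if none is present."""
    objects = json.loads(response_text).get("objects", [])
    return objects[0] if objects else {}


# Hypothetical, heavily shortened response used only to exercise the parser.
SAMPLE_RESPONSE = '{"objects": [{"type": "article", "title": "Example headline"}]}'
```

The point of the sketch is that no selectors appear anywhere: the caller passes a page URL and reads named fields from the returned entity.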

Unique features: you get structured data without the need for manual selector training; scale thanks to the Knowledge Graph; powerful search and aggregation on entities.

Technical specifications: cloud infrastructure, high resilience to layout changes due to ML; not aimed at bypassing anti-bot measures "at any cost" — focus on legal collection and the quality of extracted entities.

Integrations: API, connectors through ETL tools, export to data stores through pipelines.

Pricing and Plans

Positioned as a premium solution. As of January 2026, the Starter plan is around $299/month, with Plus/Enterprise significantly more expensive. Pricing depends on request volume, access to the Knowledge Graph, and feature set. For SMBs, the budget can be substantial; for corporations, it is in line with the value of the data. A free plan is typically not offered, though demos or trials may be available.

Advantages

- Ready entities: time savings on settings and resilience to HTML changes.

- Knowledge Graph: access to unique data and relationships.

- High-quality structuring, useful for analytics and research.

- API-first approach, easily integrates into corporate pipelines.

- Less manual support compared to selector-based parsers.

Disadvantages

- High pricing for startups and SMBs.

- Limited flexibility for non-standard sites and “heavy” anti-bot measures.

- API/ML focus: integrations require a separate no-code layer.

Best For

Medium and large businesses, analytical and research teams for whom ready entities and access to a knowledge graph are important, rather than developing their parsers.

Assessment by Criteria

- Functionality: 8.7/10

- Price: 6/10

- Usability: 7.5/10

- Support: 8/10

- Reviews: 8/10

- Overall Rating: 7.7/10

✅ Best For: companies valuing ready structured entities and Knowledge Graph access.

Main Advantage: accurate ML extraction without selector configuration.

#6. ParseHub — Reliable Visual Parser for Basic and Intermediate Tasks

Overview

ParseHub is a veteran in visual parsing that combines a desktop builder with cloud launches and result storage. Founded in the mid-2010s in Canada. Specialization: a clear scenario approach (clicks, pagination, list extraction), moderate support for dynamic content. Target audience: users who need a basic visual tool and regular export without complex anti-bot scenarios.

Key Features

- Visual markup of elements, lists, and pagination.

- Cloud launches with result storage.

- JS rendering and handling of simple SPA pages.

- Export to CSV/JSON, Google Sheets via integrations/scripts, API.

- Scheduling and task monitoring.

Unique features: a simple and time-tested interface; educational guides for typical scenarios.

Technical specifications: suitable for small and medium projects; strong anti-bot and complex Cloudflare protections are weak points; requires careful management of delays and retries.

Integrations: API, CSV/JSON, connectors via Zapier/Make.

Pricing and Plans

As of January 2026: Free with limitations; Standard around $189/month; Professional around $499/month; Enterprise — upon request. Limits concern the number of projects, parallel tasks, and speed. The price/performance ratio is justified for conservative scenarios, but complex sites may require another tool.

Advantages

- Time-tested visual approach.

- Sufficient for many basic tasks.

- Cloud launches, API, and export to CSV/JSON.

- Decent documentation and community.

- Suitable for educational purposes and pilot projects.

Disadvantages

- Complex anti-bot sites can be challenging.

- The interface and architecture are less flexible than the leaders.

- The Professional plan is priced on par with more advanced solutions.

Best For

Beginner users, SMBs, small teams scraping simple sites, catalogs, and pages with minimal protection and not requiring high update frequency.

Assessment by Criteria

- Functionality: 7.5/10

- Price: 7/10

- Usability: 7.8/10

- Support: 7.5/10

- Reviews: 7.6/10

- Overall Rating: 7.5/10

✅ Best For: small projects and educational tasks.

Main Advantage: a stable basic tool for visual parsing.

Comparison Table

| Platform | Key strengths | Price per month (January 2026) | Best for |
| --- | --- | --- | --- |
| Apify | Strong anti-bot and proxy (data center/residential/SERP), Actors store, API/webhooks/Sheets export, enterprise-level scaling and scheduling | Free, Starter ~$49, Team ~$499, Business on request | Marketplaces and SERP at large volumes |
| Octoparse | Best visual builder for beginners, cloud agents and IP rotation, Sheets/API export, scheduling | Free, Standard ~$75, Professional ~$209 | Quick no-code starts on simple/moderate sites |
| Browse AI | Training robots by recording, quick integrations with Sheets, notifications, API/webhooks | Free, Starter ~$48, Pro ~$124, Team ~$249 | Regular page and SERP monitoring at low anti-bot complexity |
| PhantomBuster | Phantoms for social media and Google, IP rotation, chains, Sheets/API | Starter ~$69, Pro ~$139, Team ~$399 | Lead generation on social media |
| Diffbot | ML extraction of entities, Knowledge Graph, API-first | From ~$299 | Analytics and research where ready entities are vital |
| ParseHub | Basic visual parser with cloud launches, CSV/JSON/API | Free, Standard ~$189, Professional ~$499 | Simple tasks; complex anti-bots aren’t its strong suit |

Alternatives Not in the TOP

It’s also worth mentioning a few solutions that are close in functionality but fell short of the leaders across the combined criteria.

- WebScraper.io Cloud: a popular extension with a cloud subscription. Great for educational cases and basic tasks, but anti-bot resilience and scalability lag behind Apify and Octoparse.

- Bright Data Web Scraper: a powerful ecosystem of proxies and tools, but it requires more setup for no-code use, and costs add up at large volumes.

- Zyte (ex-Scrapinghub) + Automatic Extraction: a strong platform and proxy manager but more targeted at tech teams and custom pipelines.

Recommendations for Selection

- Best for Beginners: Octoparse, with the easiest visual builder plus templates.

- Best for Professionals: Apify — scale, proxies, anti-bot resilience, Actors store.

- Best Value: Browse AI for small/medium volumes with infrequent launches and Sheets.

- Best Functionality: Apify — full stack and depth of settings.

- Best for Small Business: Browse AI or Octoparse (quick launch, user-friendly UX).

- Best for Medium Business: Octoparse or Apify (depending on site complexities and volumes).

- Best for Large Business: Apify or Diffbot (enterprise features, scale, SLA).

Specific scenarios: for marketplaces (Wildberries, Ozon, Amazon) — Apify due to Actors and proxies; Octoparse for simple storefronts and cards without aggressive protection. For SERP — Apify (has ready-made scrapers with geo-targeting), Browse AI — for easy monitoring and Sheets. For social media — PhantomBuster is clearly the best in terms of readiness and automation.

FAQ

Can I scrape marketplaces without blocks?

It's impossible to completely eliminate blocks, but Apify with residential proxies and appropriate delays significantly reduces the risk. Octoparse and Browse AI are suitable for less protected pages. Always follow site terms and usage ethics.

Which platform is better for Wildberries and Ozon?

Apify — due to Actors, flexible proxies, and resilience. For simple cards — Octoparse. Check legal aspects and request frequency.

What to choose for monitoring SERP?

Apify — for ready SERP scrapers and geo; Browse AI — for simplicity and Sheets; PhantomBuster — if you need to combine SERP with subsequent outreach.

Which system is best for social media?

PhantomBuster: ready phantoms, chains, export to CRM. Apify can also do it but will require more setup. Always consider platform rules and use safe limits.

Do I need my own proxies?

Apify and PhantomBuster support BYO proxies; Octoparse supports them for local tasks; Browse AI and Diffbot generally work with built-in infrastructure. Your own proxies are needed for complex anti-bot systems or specific geos.

How to choose between Octoparse and ParseHub?

Octoparse is more modern visually and friendlier to beginners; ParseHub is a “classic” for basic scenarios. In the case of complex sites, both will fall behind Apify.

How accurate are the prices?

Prices are as of January 2026 based on public sources and typical offerings; check actual terms in your account as promotions and limits may change.

Are there limits on launch frequency?

Yes, all platforms have limits on minutes/launches/credits. Apify and Octoparse scale flexibly; Browse AI and PhantomBuster are limited by the number of robots/phantoms and frequency. Plan schedules and distribute the load.

Can I export directly to BI?

Yes: via API and webhooks, as well as connectors to Zapier/Make and intermediate tables. Apify is the most flexible; Diffbot is API-first; Octoparse and Browse AI work great with Sheets.

How to bypass captchas?

Integrate a captcha solver, or lower the request frequency and improve rotation quality. Apify offers customizable pipelines and integrations; with the other platforms, rely on delays, schedules, and “human” behavior patterns.

Conclusion

Ranking results: Apify ranks best overall with anti-bot resilience, proxies, Actors ecosystem, scalability, and integrations. Octoparse is best for beginners and SMBs due to its visual builder. Browse AI offers the fastest path for monitoring with exports to Google Sheets. PhantomBuster is the undisputed leader for social media and lead generation. Diffbot is optimal for large analytics tasks with ready entities. ParseHub is a reliable basic tool for simple scenarios.

Recommendations: for marketplaces (Wildberries, Ozon, Amazon) — use Apify; for SERP — Apify or Browse AI; for social media — PhantomBuster; for deep analytical projects and semantics — Diffbot; for quick no-code starts — Octoparse; for educational and basic tasks — ParseHub. Remember the legality and policies of sites; plan loads and utilize proxy rotation.

Trends from 2024-2025 that remain relevant in 2026: the growing role of ready-made templates and AI assistants in configuring scrapers, the convergence of scraping with outreach automation and data pipelines, and stronger anti-bot defenses that make bypassing harder without quality IP rotation and behavioral emulation. In the coming years, expect more AI-driven configuration, automatic resilience tips, and “policy-aware” data collection modes that take legal aspects into account in advance.

This article was prepared in January 2026. Check current prices and limits in your accounts — providers regularly update their offerings and terms.